Veterinary AI for X-Rays

There’s buzz in the veterinary world about a paper published in March 2026 where researchers tested the accuracy of veterinary AI for x-rays. The TL;DR conclusion? Of the 6 veterinary AI tools assessed, “…none appeared suitable for clinical use in their current form.” The makers of the AI platforms say that the tools continuously (and exponentially) improve, so tests done 18 months ago don’t reflect today’s reality. Fair point, maybe, but let’s look at what the AI tools saw and what they ignored or misdiagnosed. See for yourself the actual x-rays that 3 of the 6 AI tools botched in spectacular fashion.

Veterinary AI for X-rays Study Method

It’s a small study, which is a limitation. Researchers submitted 53 retrospective cases in dogs that featured abdominal x-rays. After the AI platforms rejected some of the x-ray images, the study centered on 307 x-ray evaluations for the selected cases.

Actual human radiologists reviewed the cases and sorted them into 16 groups based on definitive diagnostic results. The groups included things like …

- Small intestine obstructions

- Gastric dilatation volvulus (bloat)

- Bladder stones

- Enlarged spleen

- Enlarged liver

Submitting Images to the Veterinary AI for X-ray Platforms

The paper describes the x-ray submission process as follows.

“Each case was submitted to 6 AI platforms between September and December 2024: Picoxia (FAS), Radimal, RapidRead (Antech Imaging Services), SignalRAY (SignalPET), Vetology AI (Vetology Innovations), and X Caliber Vet AI (SK Telecom). To prevent bias, the AI platforms were not informed that cases were being submitted for research and all submissions were made under standard, paid subscription accounts. After submission, each AI platform generated binary outputs (yes/no) for the presence or absence of these specific labels, together comprising the full AI-generated report.”

The Results

The researchers write that “…the AI platform algorithms generally identified abnormal cases but failed to identify normal cases.”

For several reasons, they felt that balanced accuracy, the average of how often a tool correctly flagged abnormal x-rays and how often it correctly cleared normal ones, provides a “better representation of their performance (60% to 69%)” than raw accuracy.

That means a good chunk of the time, the tools were wrong in a variety of ways:

- Missing abnormal diagnoses

- Labeling normal x-rays as abnormal

- Wrongly diagnosing cases
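Why would researchers prefer balanced accuracy? Here's a small sketch showing how a tool that calls almost everything abnormal can look good on raw accuracy and much worse on balanced accuracy. It assumes the common textbook definition (the average of sensitivity and specificity); the paper may compute it per diagnostic label, and the counts below are made up for illustration, not taken from the study.

```python
def raw_accuracy(tp, fn, tn, fp):
    """Share of all x-rays the tool got right, normal and abnormal lumped together."""
    return (tp + tn) / (tp + fn + tn + fp)


def balanced_accuracy(tp, fn, tn, fp):
    """Average of sensitivity (abnormal x-rays caught) and specificity (normal x-rays cleared)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


# Hypothetical counts, NOT from the study: 90 abnormal and 10 normal x-rays,
# read by a tool that flags nearly everything as abnormal.
tp, fn = 85, 5  # abnormal x-rays: 85 correctly flagged, 5 missed
tn, fp = 2, 8   # normal x-rays: only 2 correctly called normal, 8 flagged anyway

print(f"raw accuracy:      {raw_accuracy(tp, fn, tn, fp):.0%}")
print(f"balanced accuracy: {balanced_accuracy(tp, fn, tn, fp):.0%}")
```

With these made-up counts, raw accuracy comes out to 87%, while balanced accuracy drops to about 57%, because the tool's poor record on normal x-rays now counts for half the score instead of being swamped by the many abnormal cases.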

What Everyone is Talking About

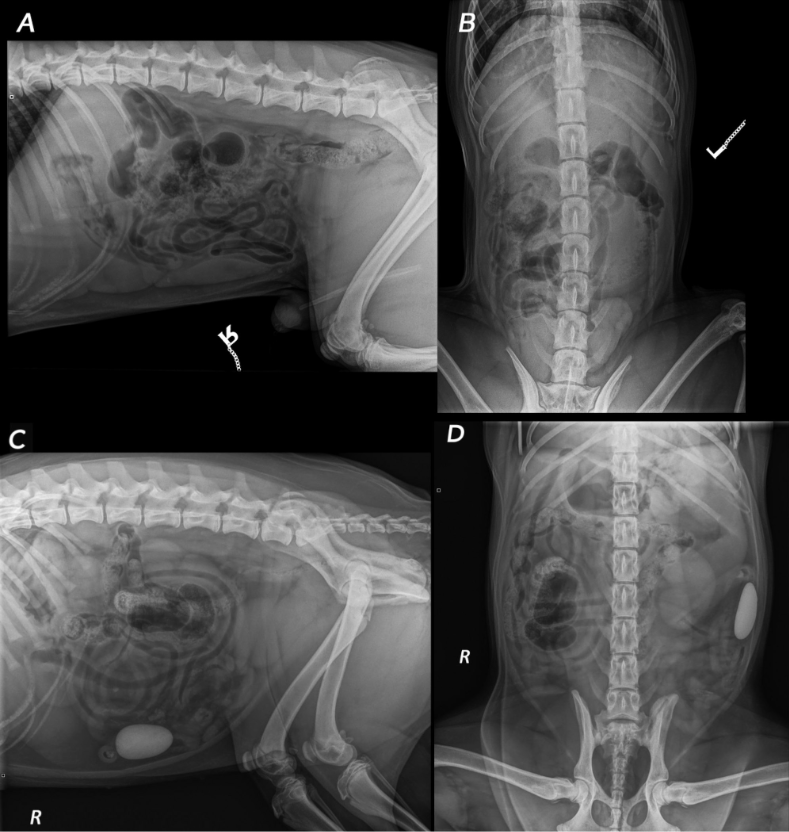

The case that’s causing the buzz in vet med featured a round stone clearly visible in the small intestine.

- 2 of the veterinary AI tools labeled the x-rays normal

- 1 of the tools did label it as abnormal, but then generated 5 inaccurate diagnoses

The researchers clearly note that a foreign body like that in the intestines is considered “critical” and something “warranting major intervention.” So, that’s a big error.

See for yourself in the x-ray above.

Study Conclusions

The paper’s conclusion says … “Diagnostic performance varied between the 6 AI platforms tested and was overall low to moderate for this small sample. Even the best-performing algorithm had notable limitations, and none appeared suitable for clinical use in their current form.”

For now? Even if my own veterinary team uses AI tools to help them (as with Clover’s recent pneumonia, a small area of collapsed lung, and severe arthritis in her spine), I’d really like their own expert eyes figuring out what’s what.